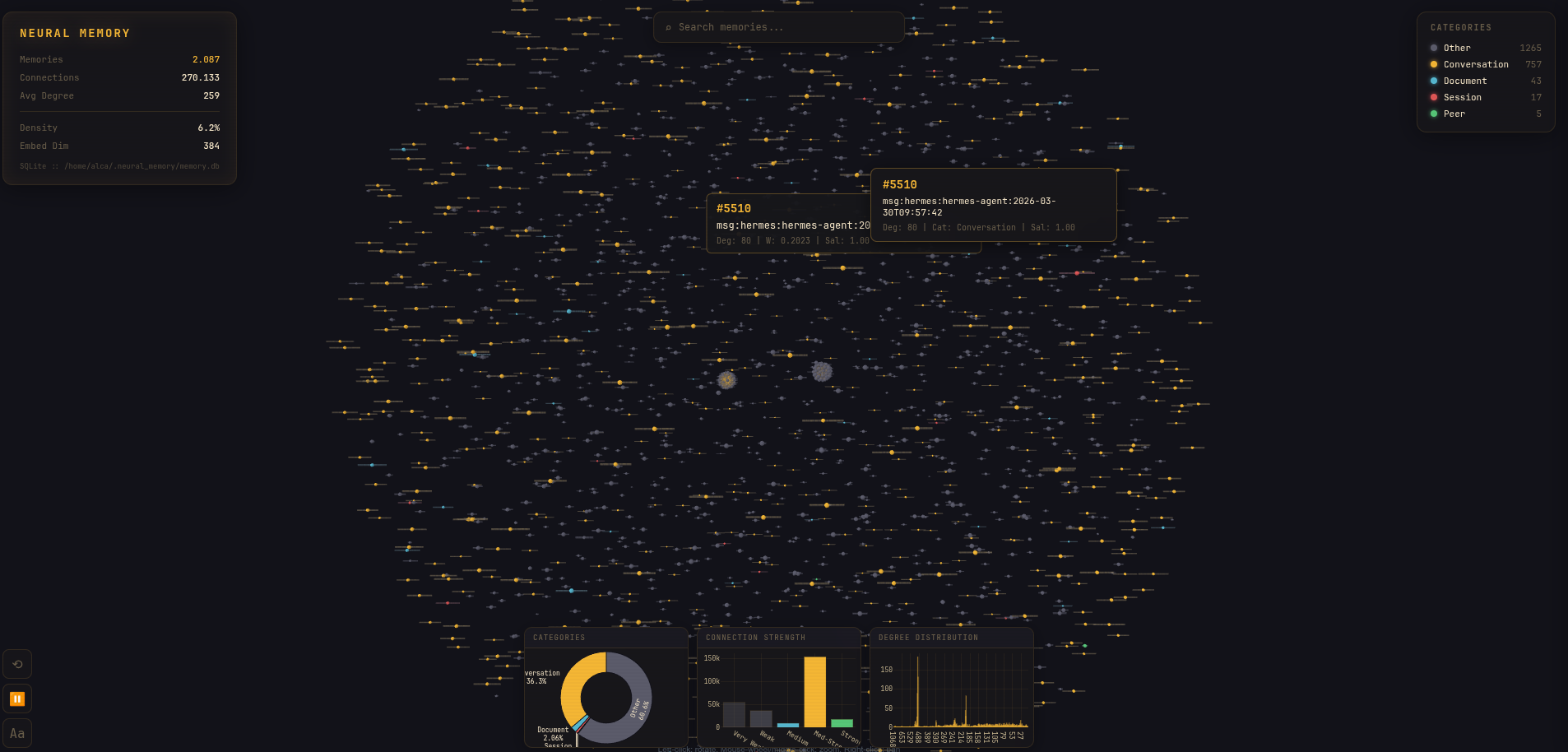

Core Architecture

FastEmbed (ONNX)

Primary embedding backend. intfloat/multilingual-e5-large — 1024 dimensions.

ONNX runtime, no PyTorch dependency. ~50ms per embedding on CPU.

Falls back through sentence-transformers → tfidf → hash.

Knowledge Graph

Automatic connection creation via cosine similarity threshold.

BFS spreading activation with decay factor.

Edge types: semantic, bridge (from Dream REM), temporal.

SQLite connections table with unique constraint.

Dream Engine

Autonomous background consolidation inspired by biological sleep. NREM: replay & strengthen/weaken connections. REM: bridge discovery between isolated memories. Insight: community detection via BFS connected components.